Open-Source Image Generation Models in 2026: A Developer's Guide

You want to generate images in your app. The obvious answer six months ago was "call the OpenAI API and move on." But between $0.05 per image at scale, the latency of a round-trip to a GPU cluster in Virginia, and the privacy headache of sending user prompts through a third party, the obvious answer is starting to look expensive.

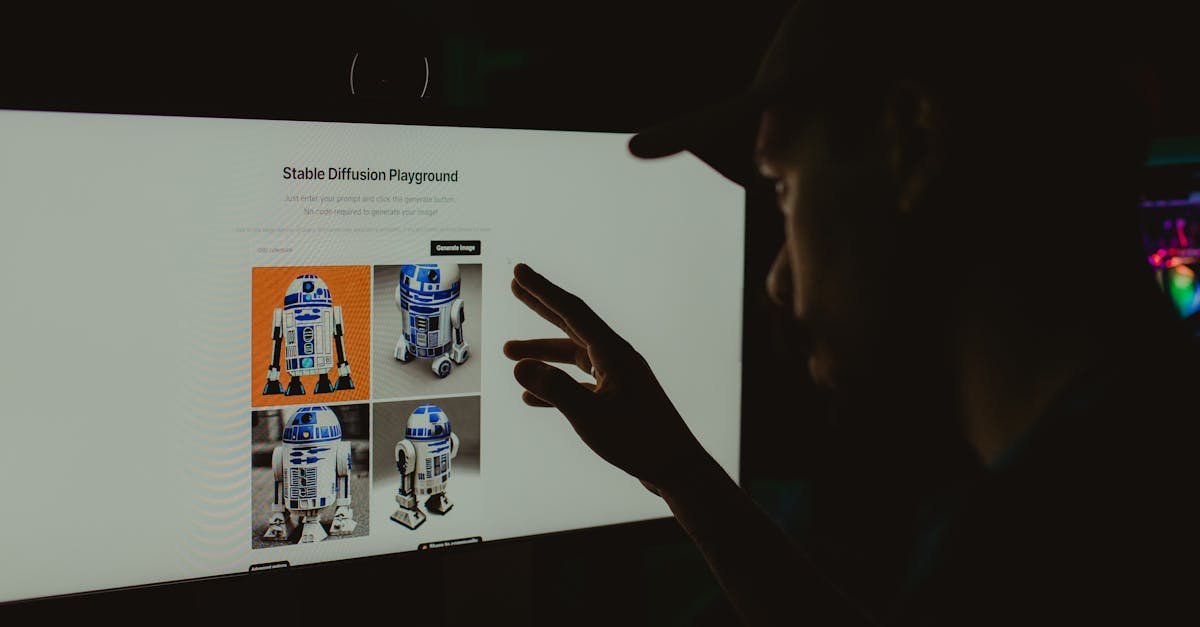

The open-source image generation ecosystem has moved fast in 2026. FLUX.2 set a new quality bar. A pure C implementation from the creator of Redis runs FLUX on a Raspberry Pi. A Rust CLI called Mold generates images with one command and zero Python dependencies. The question isn't whether open-source models can compete — it's which one you should actually use, and whether your GPU can run it without melting.

This guide maps the landscape as it actually exists right now: what's production-viable, what's experimental, what your hardware needs, and what the licenses actually allow.

The 2026 Open-Source Image Gen Landscape

The field has narrowed to two poles, plus a growing list of specialized alternatives.

FLUX.2 — released by Black Forest Labs (founded by the original Stable Diffusion team) in November 2025 and expanded through early 2026 — is the current quality leader. Its headline feature is text rendering: where previous open-source models turned "a sign that says WELCOME" into glyph soup, FLUX.2 renders legible text reliably. The model family spans from the 32B-parameter FLUX.2 [dev] down to the 4B-parameter [klein] variant that runs on consumer GPUs. The [klein] release in January 2026 was the turning point — sub-second generation on an RTX 4070, Apache 2.0 license, under 13GB VRAM for the 4B model.

Stable Diffusion 3.5 — released by Stability AI as their answer to FLUX — holds ground on artistic stylization and benefits from the massive SD ecosystem (ControlNet, LoRA, IP-Adapter, literally thousands of community fine-tunes). SD3.5 Large needs 16GB minimum, but SD3.5 Medium runs on 8GB cards. The license is the friction point: free commercial use under $1M annual revenue, but you need an Enterprise license above that threshold. The earlier license whiplash (SD3 briefly had a restrictive license before being walked back) eroded community trust, but the model itself is solid.

Beyond the big two, Z-Image Turbo and Qwen-Image have emerged as credible alternatives focused on specific niches — Z-Image on speed, Qwen-Image on multi-modal understanding. Neither has the ecosystem depth of SD or the raw quality of FLUX, but both are improving fast.

The practical takeaway: FLUX.2 for photorealism and text-heavy outputs, SD3.5 for artistic flexibility and community tooling, and the newcomers for specific performance-constrained use cases.

But the model landscape is only half the story. The other half is how you actually run these things without a $30,000 workstation.

The Lightweight Revolution: Mold CLI, antirez/C, and CPU-First Inference

For most of 2025, running an open-source image model meant installing PyTorch (2GB download), the CUDA toolkit, xformers, a Python environment, and probably ComfyUI just to get an image out. A few developers looked at this stack and decided it was absurd.

Mold CLI is a single Rust binary. mold run "a cat on a rocket" — that's it. It auto-downloads models from Hugging Face on first run, supports FLUX, SDXL, SD3.5, SD1.5, Z-Image, and experimental FLUX.2 Klein and Qwen-Image backends, and runs on as little as 1.7GB VRAM for SD1.5. Built on Hugging Face's candle ML framework, it has zero Python dependencies, no libtorch, no ONNX runtime. Pipe-friendly stdin/stdout for chaining, batch generation with incrementing seeds, PNG metadata embedding. MIT licensed.

The VRAM requirements tell the story:

| Model | Steps | VRAM | Use Case |
|---|---|---|---|
| SD1.5 | 25 | ~1.7 GB | Lightweight, huge ControlNet ecosystem |
|  | 28 | ~2.7 GB | Efficient high-quality |
|  | 4 | ~5.1 GB | Ultra-fast general purpose |
|  | 25 | ~6.7 GB | Photorealistic |
|  | 4 | ~7.5 GB | Fast, modern quality |
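One way to read the table is as a lookup: given a card's VRAM, which rows fit? Here is a minimal sketch that uses only the step counts and VRAM figures listed above. The function name and the `headroom_gb` margin are assumptions made for illustration; neither is part of Mold.

```python
# Rows copied from the table above: (use case, steps, approximate VRAM in GB).
ROWS = [
    ("Lightweight, huge ControlNet ecosystem", 25, 1.7),
    ("Efficient high-quality", 28, 2.7),
    ("Ultra-fast general purpose", 4, 5.1),
    ("Photorealistic", 25, 6.7),
    ("Fast, modern quality", 4, 7.5),
]


def rows_that_fit(vram_gb: float, headroom_gb: float = 1.0) -> list[str]:
    """Return the use-case labels whose listed VRAM fits on the card.

    headroom_gb is a hypothetical safety margin for the OS, the display,
    and other processes; tune it for your own machine.
    """
    budget = vram_gb - headroom_gb
    return [label for label, _steps, need in ROWS if need <= budget]
```

With the default margin, an 8GB card clears the first four rows and misses the last; treat the result as a starting point, since the table's figures are approximate.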

antirez/flux2.c, Salvatore Sanfilippo's pure C implementation of FLUX.2-klein-4B, is the most impressive piece of systems programming in the AI space this year. Zero dependencies beyond the C standard library. Three backends: Metal for Apple Silicon, BLAS for CPU acceleration (~30x speedup), and a generic pure-C fallback. Memory-mapped weights reduce peak RAM from 16GB to 4-5GB. It runs on a Raspberry Pi 4 with 8GB of RAM. On an M3 Max, it matches PyTorch performance at 512×512 resolution (13 seconds) and is only slightly slower at 1024×1024 (29s vs 25s). The entire text encoder and VAE decoder are embedded in the executable.
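The memory-mapping trick is worth seeing in miniature. The sketch below is not the project's C code; it is a Python illustration of the general technique, using a made-up flat file of float32 values as a stand-in for a weight file. The file layout and both function names are assumptions.

```python
import mmap
import os
import struct
import tempfile


def write_weights(path: str, values: list[float]) -> None:
    """Write float32 values to a flat binary file (stand-in for a weight file)."""
    with open(path, "wb") as f:
        f.write(struct.pack(f"<{len(values)}f", *values))


def read_slice(path: str, start: int, count: int) -> list[float]:
    """Read `count` float32 values starting at index `start` via a memory map.

    The OS pages in only the parts of the file that are touched, so peak
    memory tracks what is being used rather than the full file size.
    """
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
            raw = mm[start * 4:(start + count) * 4]  # 4 bytes per float32
    return list(struct.unpack(f"<{count}f", raw))


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "weights.bin")
    write_weights(path, [float(i) for i in range(1000)])
    print(read_slice(path, 500, 3))
```

The same idea scales to multi-gigabyte files: the map itself costs almost nothing, and only the layers currently being computed need to sit in RAM.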

antirez built it with Claude Code and documented the process — using an IMPLEMENTATION_NOTES.md file to maintain context across long AI coding sessions. The HN thread hit 405 points. The takeaway isn't just "FLUX runs in C now." It's that the ML infrastructure bloat — PyTorch, CUDA, Python packaging, gigabytes of framework dependencies — is optional. You can ship image generation as a single static binary.

Between Mold and flux2.c, the barrier to embedding image generation in an application dropped from "provision a GPU instance" to "link a library." If you're a developer who's been waiting for image gen to feel like a normal software dependency rather than an ML research project you babysit, this is the shift that matters.

ComfyUI in Practice: What the Node Graph Doesn't Tell You

ComfyUI is the de facto workflow tool for open-source image generation. It's powerful. It's also the most common source of "why does my image look worse than the demo?" confusion.

The main culprit in 2026 has been Dynamic VRAM, a feature ComfyUI enabled by default in March 2026 to address the reality that most users don't have 24GB GPUs. It works by copying only necessary model weights to VRAM at the moment they're needed, rather than pre-allocating everything. The speed improvement is real: 3x faster on some workflows. But the community has documented a pattern: Dynamic VRAM can silently degrade quality when it offloads, mid-generation, weights that the model still needs for fine detail passes. You don't notice until you export at full resolution and the fine textures are missing.

The fix isn't to disable Dynamic VRAM — it genuinely helps on 12GB cards. It's to understand what it does and when to use manual control instead:

For FLUX.2 at 1024×1024 on 12GB: Use --enable-dynamic-vram with --fp16-intermediates (added in ComfyUI v0.18.0, March 2026). This halves intermediate precision memory with minimal quality impact.

For SD3.5 Large on 12GB: Use manual tiled VAE decode. Split the latent image into tiles, decode each separately, and stitch at the end. Slightly slower, but no quality loss.

For any model at max quality: Disable Dynamic VRAM entirely, use a low step count (4-8 with distilled models), and accept the VRAM ceiling of your card.
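The tiled decode idea is simple enough to sketch. This is a toy under stated assumptions, not ComfyUI's implementation: `fake_decode` is a hypothetical stand-in for a real VAE decoder (a nearest-neighbour upscale), the latent is a single-channel list of lists, and `SCALE` is set to 2 to keep the example small.

```python
SCALE = 2  # stand-in upscale factor; many latent-diffusion VAEs upscale by 8x


def fake_decode(latent: list[list[float]]) -> list[list[float]]:
    """Hypothetical decoder: nearest-neighbour upscale. A real VAE goes here."""
    out = []
    for row in latent:
        wide = [v for v in row for _ in range(SCALE)]
        out.extend([list(wide) for _ in range(SCALE)])
    return out


def tiled_decode(latent, tile: int, decode=fake_decode):
    """Decode `latent` in tile x tile blocks and stitch the results."""
    h, w = len(latent), len(latent[0])
    image = [[0.0] * (w * SCALE) for _ in range(h * SCALE)]
    for top in range(0, h, tile):
        for left in range(0, w, tile):
            # Edge tiles are simply smaller; slicing handles that.
            block = [row[left:left + tile] for row in latent[top:top + tile]]
            decoded = decode(block)  # only one tile is decoded at a time
            for dy, out_row in enumerate(decoded):
                y = top * SCALE + dy
                x = left * SCALE
                image[y][x:x + len(out_row)] = out_row
    return image
```

Because the stand-in decoder is purely local, the stitched result is identical to decoding the whole latent at once. A real VAE looks at neighbouring latents, so practical implementations usually overlap the tiles and blend the borders to keep seams from showing.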

The ComfyUI ecosystem now includes tools like ComfyUI-ReservedVRAM (dynamically adjusts reserved memory) and Block Swap for the Wan video wrapper (reduces VRAM by 40%+ by offloading inactive model blocks). If you're building production workflows, these aren't optional — they're the difference between "it works on my machine" and "it works when 50 people use it simultaneously."

The broader lesson: the node graph abstracts away the hardware reality. Understanding what's happening under the abstraction is what separates usable output from "why does this look weird?"

The Licensing Minefield: What "Open Source" Actually Means in 2026

If you're building a product, the license matters more than the benchmark scores. Here's the reality in 2026:

| Model | License | Commercial Use |
|---|---|---|
| FLUX.2 [klein] 4B | Apache 2.0 | Yes, unlimited |
| FLUX.2 [dev] 32B | FLUX Non-Commercial | No, without BFL agreement |
| FLUX.2 [pro/flex/max] | Proprietary API | API only |
| SD 3.5 (Community) | Stability AI Community License | Yes, under $1M annual revenue |
| SD 3.5 (Enterprise) | Custom | Negotiated |
| SD 1.5 / SDXL | CreativeML Open RAIL-M | Yes, unlimited |
| Mold CLI | MIT | Yes, unlimited |
| antirez/flux2.c | MIT | Yes, unlimited |

The pattern: the small, distilled models trend toward genuinely open licenses (Apache 2.0, MIT). The large, highest-quality models trend toward restricted or proprietary licenses. This creates a practical decision tree for developers:

Commercial product with no revenue cap concerns: FLUX.2 [klein] 4B (Apache 2.0) or SDXL (Open RAIL-M) for unlimited free use.

Startup under $1M revenue: SD 3.5 Community License works, but plan for the licensing cliff when you cross the threshold.

Internal tool, no distribution: Everything is fair game — use whatever produces the best quality on your hardware.

Need the absolute best quality and can pay: FLUX.2 [pro] API at $0.05/image is still cheaper than Midjourney's enterprise pricing.
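The decision tree above can be written down as a small function. This is a sketch of this article's reading of the licenses, not legal guidance; the `Project` fields and the function name are assumptions made for the example.

```python
from dataclasses import dataclass

REVENUE_CAP_USD = 1_000_000  # SD 3.5 Community License threshold cited above


@dataclass
class Project:
    annual_revenue_usd: float
    distributed: bool              # False for internal-only tools
    will_pay_for_api: bool = False


def license_options(p: Project) -> list[str]:
    """Return candidate models, following the four branches listed above."""
    if not p.distributed:
        return ["any model (internal use)"]
    # Permissively licensed models are always on the list.
    options = ["FLUX.2 [klein] 4B (Apache 2.0)", "SDXL (Open RAIL-M)"]
    if p.annual_revenue_usd < REVENUE_CAP_USD:
        options.append("SD 3.5 (Community License)")
    if p.will_pay_for_api:
        options.append("FLUX.2 [pro] API")
    return options
```

Keeping the threshold in one named constant makes the "licensing cliff" visible in code review: the day revenue crosses it, one option silently drops off the list.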

The SD3 license whiplash — where Stability AI briefly introduced a restrictive license before walking it back — is a reminder that open-source AI licensing is still unstable. If you're building a product, bet on models with Apache 2.0 or MIT licenses and a track record of not changing them. The 4B FLUX.2 [klein] under Apache 2.0 is currently the safest bet in open-source image generation.

Enterprise Reality Check: Orchestration Over Single Models

Enterprises aren't picking one model. The a16z 2026 enterprise AI report documented what practitioners already knew: organizations deploy an average of 14+ models, not one. The investment isn't in any single model — it's in the orchestration layer that routes prompts to the right model based on task, cost, and quality requirements.

This has practical implications for developers:

Don't build around one model's API semantics. The model that's best today won't be in 12 months. Abstract the generation interface so you can swap FLUX for whatever comes next.

The ComfyUI node graph isn't just for hobbyists. Enterprises are running ComfyUI clusters because the workflow abstraction makes model-swapping a drag-and-drop operation rather than a code change.

Hugging Face's model hub is the real platform. Whether you use Mold (which pulls from HF), diffusers, or a custom pipeline, the model weights live on Hugging Face. Build your tooling to consume from there, not from any single model provider.
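What "abstract the generation interface" might look like in practice: a minimal sketch, assuming every backend can be wrapped as a function from prompt to image bytes. All names here (`Backend`, `Router`, the cost and quality fields) are hypothetical, and the scores are whatever you measure yourself, not published numbers.

```python
from dataclasses import dataclass
from typing import Callable

# A backend is anything that turns a prompt into image bytes.
Generator = Callable[[str], bytes]


@dataclass
class Backend:
    name: str
    generate: Generator
    cost_per_image: float    # hypothetical cost estimate you maintain
    quality: int             # hypothetical 1-10 score from your own evals
    tasks: frozenset         # task labels this backend handles, e.g. {"photo"}


class Router:
    def __init__(self, backends: list):
        self.backends = backends

    def pick(self, task: str, min_quality: int = 0) -> Backend:
        """Cheapest backend that supports the task and meets the quality bar."""
        ok = [b for b in self.backends
              if task in b.tasks and b.quality >= min_quality]
        if not ok:
            raise LookupError(f"no backend for task={task!r}")
        return min(ok, key=lambda b: b.cost_per_image)

    def generate(self, prompt: str, task: str, min_quality: int = 0) -> bytes:
        return self.pick(task, min_quality).generate(prompt)
```

Application code calls `router.generate(prompt, task="photo")` and never names a model. Swapping FLUX for its successor becomes a change to the backend list, not to every call site.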

This fragmentation looks like a problem. It's actually the ecosystem's strength — it means no single company controls the direction of open-source image generation. FLUX.2 leads today. Something else will lead in 2027. The orchestration code you write now should outlast whichever model is currently on top.

What Developers Should Bet On

The open-source image generation landscape in 2026 is healthier than it's ever been, but it's also more complex. Here's what I'd actually bet on if I were building today:

Bet on the lightweight tools. Mold CLI and flux2.c represent the direction of travel — image generation as a library, not a research artifact. The PyTorch monolith is not the long-term deployment story. Start with Mold for rapid experimentation and flux2.c for embedded deployment.

Bet on FLUX.2 [klein] 4B for production. Apache 2.0, consumer GPU-friendly, sub-second generation, and text rendering that actually works. It's the first open-source model where "good enough for a production feature" isn't a compromise.

Bet on the orchestration layer, not the model. The model ranking will change. The integration surface — how you route prompts, manage VRAM across concurrent requests, cache embeddings, and version your workflows — is the part you'll still be maintaining in two years.
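Caching embeddings is one of the easier pieces of that integration surface to get started on. Here is a minimal sketch of a least-recently-used cache keyed by model version and prompt; the `encode` callable stands in for whatever text encoder your pipeline uses, and the class itself is hypothetical.

```python
import hashlib
from collections import OrderedDict
from typing import Callable


class EmbeddingCache:
    """Small LRU cache for prompt embeddings, keyed by model version + prompt."""

    def __init__(self, encode: Callable[[str], list], model_version: str,
                 max_items: int = 1024):
        self._encode = encode
        self._version = model_version
        self._max = max_items
        self._items = OrderedDict()
        self.misses = 0

    def _key(self, prompt: str) -> str:
        # Including the version means a model upgrade never serves stale vectors.
        raw = f"{self._version}\0{prompt}".encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def get(self, prompt: str) -> list:
        key = self._key(prompt)
        if key in self._items:
            self._items.move_to_end(key)      # mark as most recently used
            return self._items[key]
        self.misses += 1
        value = self._encode(prompt)
        self._items[key] = value
        if len(self._items) > self._max:
            self._items.popitem(last=False)   # evict least recently used
        return value
```

Tying the key to a model version is the design choice that matters: it lets the cache survive a model swap without anyone remembering to flush it.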

Don't bet on "one model to rule them all." The ecosystem is fragmenting by use case — FLUX for photorealism, SD for artistic flexibility, specialized models for speed and domain-specific tasks. This isn't a temporary phase. It's the stable state of the ecosystem. Embrace the diversity and build your pipeline to handle it.

The open-source image generation story of 2026 isn't that the models caught up to proprietary alternatives — they haven't quite, in raw photorealism, though the gap is small. It's that the tools for using them crossed a threshold from "ML experiment" to "software dependency." You can build with them the same way you build with any other library: install, import, generate. That's the shift worth paying attention to.