Multi-Agent AI Systems Architecture in 2026: What Actually Works

Introduction

If you spend any time around AI builders in 2026, you will hear the same phrase over and over: "we need a multi-agent system." It sounds sophisticated. It sounds scalable. It sounds like the natural next step once a single agent starts hitting limits.

The problem is that most teams reach for multi-agent architecture too early. They add planners, reviewers, tool specialists, and memory layers before they have even proved that one strong agent with good tools cannot do the job. That is how a clean prototype turns into an orchestration problem.

The better question is not whether multi-agent systems are real. They are. Anthropic has shown that a multi-agent research workflow can outperform a single agent on broad research tasks, and Google Research has shown that agent systems work best when the workload is genuinely parallel rather than sequential. The real question is simpler: when does a multi-agent architecture create leverage, and when does it just create overhead?

This guide answers that directly. You will see when to stay single-agent, when to move to orchestrator-worker, and which controls matter if you want a multi-agent system that survives contact with production.

Why Most Multi-Agent AI Systems Architecture Advice Breaks Down in Production

Most published advice on multi-agent AI systems architecture is still demo-first. It shows a planner agent, a coder agent, a reviewer agent, and a memory agent passing tasks around in a neat diagram. That diagram is useful right up until the first real failure.

In production, the bottleneck is rarely "we need more agents." It is usually one of these:

Agents disagree because each saw different context.

A handoff loses important state because the schema was loose.

Two workers duplicate the same work because the planner boundary was fuzzy.

The system enters a retry loop that looks intelligent but burns tokens and time.

That is why recent engineering guidance from the GitHub blog on multi-agent workflows reads more like distributed-systems advice than prompt-engineering advice. The same pattern shows up in Microsoft's multi-agent patterns guidance: typed payloads, scoped permissions, explicit routing, and human approvals matter because a multi-agent system is not one mind. It is a coordination system.

One analogy helps here. Designing a multi-agent system before you need one is like hiring a room full of specialists before you have written the first operating manual. You do not get expertise. You get meetings.

That is the real reason much multi-agent advice breaks down: it treats specialization as the hard part, when coordination is the hard part. That leads naturally to the first design decision you should make.

The Decision Tree: Single Agent, Orchestrator-Worker, or Verified Multi-Agent System?

The fastest way to improve an agent system is usually to choose the smallest architecture that fits the task.

Stay single-agent when the task is mostly sequential

If one agent can reason through the task with the right tools, a multi-agent architecture is usually unnecessary. This is especially true for:

Linear workflows

Small or medium context sizes

Tasks where the same evidence needs to be visible end to end

Low-latency user experiences

A single capable agent with retrieval, tool use, and strict output schemas often beats a team of smaller agents because it avoids handoff loss and coordination tax.

Use orchestrator-worker when the work can branch cleanly

This is the pattern that keeps showing up in successful systems. An orchestrator breaks the work into bounded jobs, then specialist workers execute those jobs independently. Anthropic's multi-agent research system writeup is the clearest example: a lead agent delegates focused subproblems, then synthesizes the returned results.

Use this pattern when:

The problem can be broken into independent subquestions

Workers do not need to constantly negotiate with each other

Parallelism matters more than deep shared reasoning

You can define a strong return contract for each worker
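As a rough illustration of the pattern, here is a minimal Python sketch of an orchestrator fanning out independent subquestions and collecting typed results. Everything in it is an assumption made for the example: `WorkerResult`, `run_worker`, and `orchestrate` are hypothetical names, and the worker is a stub standing in for a real model call.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkerResult:
    """Hypothetical return contract that every worker must satisfy."""
    subquestion: str
    answer: str
    sources: tuple[str, ...]


def run_worker(subquestion: str) -> WorkerResult:
    # Stub standing in for a model call with scoped tools (assumption).
    return WorkerResult(subquestion, f"stub answer for: {subquestion}", ("stub-source",))


def orchestrate(subquestions: list[str], max_workers: int = 4) -> list[WorkerResult]:
    """Fan out independent subquestions and collect results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() preserves input order, so synthesis sees a stable sequence.
        return list(pool.map(run_worker, subquestions))


results = orchestrate(["pricing of vendor A", "pricing of vendor B"])
for result in results:
    print(result.subquestion, "->", result.answer)
```

Note that the workers never talk to each other. If your version of `run_worker` would need to, that is a hint the task does not branch cleanly.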

Add verification only when mistakes are expensive

A reviewer, verifier, or policy-checking agent can be valuable, but only when the extra cost is cheaper than a bad answer. Think code generation, risky tool actions, compliance-sensitive tasks, or customer-facing outputs where hallucinations have a real downside.

This is the practical decision tree:

Start with one agent and good tools.

Add workers only if the task branches cleanly.

Add verification only if failure cost justifies a second pass.

That sounds less glamorous than "agent swarms," but it is the architecture logic that keeps showing up in systems that actually last. Once you choose that posture, the useful patterns get much easier to spot.
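The three steps can be written down as a tiny function. This is only a sketch: `Plan` and `choose_architecture` are hypothetical names, and the inputs (whether the task branches cleanly, plus rough cost estimates in whatever unit you prefer) are assumptions you would have to supply yourself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    use_workers: bool   # orchestrator-worker fan-out
    use_verifier: bool  # bounded second pass


def choose_architecture(
    branches_cleanly: bool,
    failure_cost: float,
    verification_cost: float,
) -> Plan:
    """Start from a single agent and add pieces only when the inputs justify them."""
    return Plan(
        use_workers=branches_cleanly,
        # Verify only when a bad answer costs more than the second pass.
        use_verifier=failure_cost > verification_cost,
    )


# A sequential, low-stakes task stays single-agent.
print(choose_architecture(branches_cleanly=False, failure_cost=1.0, verification_cost=5.0))
```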

The Three Patterns That Actually Work

If you strip away the hype, three multi-agent patterns consistently survive real-world use.

1. Parallel workers for breadth-first work

This pattern is ideal for research, monitoring, comparison tasks, and any job where workers can explore different slices of the problem at the same time. It is the clearest case where a multi-agent architecture creates real upside instead of complexity for its own sake.

Google Research's recent work on scaling agent systems points in the same direction: agent systems tend to help when the workload is parallelizable, and often disappoint when the workload is tightly sequential. That sounds obvious in hindsight, but splitting sequential work across agents is the mistake many teams keep making.

2. Reviewer loops for quality control

This is not the same thing as letting agents argue forever. A useful reviewer loop is bounded. One agent creates, one agent checks against a rubric, and the system either fixes once or escalates. Anything more open-ended tends to invite drift.

Reddit threads about unstable multi-agent loops keep returning to the same complaint: without hard limits, reviewer systems become doubt machines. They keep questioning, rewriting, or second-guessing without moving the answer forward.
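A bounded loop of this kind is short enough to sketch. The version below assumes three hypothetical callables (`create`, `check`, `fix`) that would wrap model calls in practice. It allows one fix by default and raises instead of looping again.

```python
from typing import Callable, Optional


class Escalation(Exception):
    """Raised when the draft still fails review after the allowed fixes."""


def bounded_review(
    create: Callable[[], str],
    check: Callable[[str], Optional[str]],  # None means pass, otherwise a critique
    fix: Callable[[str, str], str],
    max_fixes: int = 1,
) -> str:
    draft = create()
    for _ in range(max_fixes):
        critique = check(draft)
        if critique is None:
            return draft
        draft = fix(draft, critique)
    # Final check after the last permitted fix; no further rewriting happens.
    critique = check(draft)
    if critique is None:
        return draft
    raise Escalation(critique)


result = bounded_review(
    create=lambda: "teh answer",
    check=lambda draft: "typo: teh" if "teh" in draft else None,
    fix=lambda draft, critique: draft.replace("teh", "the"),
)
print(result)
```

The important design choice is that the stop condition lives in the loop itself, not in the reviewer's prompt.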

3. Bounded specialists for tool-heavy work

Some workers exist not because they "think differently," but because they hold a narrow tool scope. A retrieval worker, a browser worker, or a policy-check worker can be useful when isolation reduces risk. In those cases, the agent boundary is really a permission boundary.

That last point matters more than most architecture diagrams admit. Many so-called specialist agents are better understood as constrained services with language interfaces. Once you accept that, the next question is not "how many agents?" but "what controls keep them from tripping over each other?"
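To make the permission-boundary idea concrete, here is a minimal sketch in which a worker is little more than a name plus an allow-list of tools. `ScopedWorker`, the tool names, and the registry are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ScopedWorker:
    name: str
    allowed_tools: frozenset[str]

    def call_tool(
        self,
        tool: str,
        registry: dict[str, Callable[[str], str]],
        arg: str,
    ) -> str:
        # The boundary is enforced in code, not by asking the model to behave.
        if tool not in self.allowed_tools:
            raise PermissionError(f"{self.name} may not call {tool!r}")
        return registry[tool](arg)


# Hypothetical tool registry with stub implementations.
registry = {
    "search": lambda query: f"results for {query}",
    "delete_record": lambda record_id: f"deleted {record_id}",
}

retrieval = ScopedWorker("retrieval-worker", frozenset({"search"}))
print(retrieval.call_tool("search", registry, "vendor pricing"))
```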

The Controls That Make Multi-Agent Systems Survivable

This is the part many articles rush past, even though it decides whether the system is maintainable six weeks later.

Use typed handoff contracts

Every delegation should have a defined input, a defined output, and a bounded purpose. Loose natural-language handoffs feel flexible, but they also make failure analysis miserable. If a worker can return anything, the orchestrator cannot reliably validate anything.
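One way to picture a typed handoff is a pair of small dataclasses and a parser that rejects anything outside the contract. The field names and status values below are assumptions chosen for the example, not a standard schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskBrief:
    task_id: str
    purpose: str   # the bounded reason this delegation exists
    question: str


@dataclass(frozen=True)
class TaskResult:
    task_id: str
    status: str    # "ok" or "failed"
    summary: str


ALLOWED_STATUS = {"ok", "failed"}
REQUIRED_FIELDS = {"task_id", "status", "summary"}


def parse_result(brief: TaskBrief, raw: dict) -> TaskResult:
    """Reject anything that does not match the contract instead of guessing."""
    missing = REQUIRED_FIELDS - set(raw)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    if raw["task_id"] != brief.task_id:
        raise ValueError("result does not belong to this brief")
    if raw["status"] not in ALLOWED_STATUS:
        raise ValueError(f"unknown status: {raw['status']!r}")
    return TaskResult(raw["task_id"], raw["status"], str(raw["summary"]))


brief = TaskBrief("t-1", "compare pricing", "What does vendor A charge?")
parsed = parse_result(brief, {"task_id": "t-1", "status": "ok", "summary": "stub"})
print(parsed)
```

When a worker returns something malformed, the failure shows up at the handoff with a specific reason, which is exactly where you want to find it.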

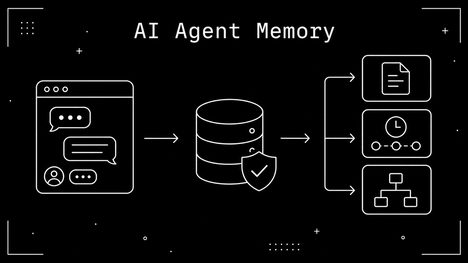

Checkpoint state instead of passing giant context blobs

Passing full conversation state to every worker looks safe, but it often creates noise and cost. A better pattern is to checkpoint the minimum state a worker needs, then log the returned artifact separately. That makes replay and debugging dramatically easier.
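Here is a small sketch of that idea using only the standard library. The helper names (`minimal_state`, `save_json`), the file naming, and the example state are assumptions. A real system would likely write to a database or object store rather than a temporary directory.

```python
import json
import tempfile
from pathlib import Path


def minimal_state(full_state: dict, needed: set[str]) -> dict:
    """Keep only the keys a worker actually needs."""
    return {key: full_state[key] for key in needed if key in full_state}


def save_json(directory: Path, name: str, payload: dict) -> Path:
    """Write one JSON file per checkpoint or artifact so each can be replayed alone."""
    path = directory / f"{name}.json"
    path.write_text(json.dumps(payload, sort_keys=True))
    return path


full_state = {
    "question": "Compare vendor A and vendor B pricing",
    "constraints": ["public sources only"],
    "transcript": "(long earlier conversation the worker does not need)",
}

with tempfile.TemporaryDirectory() as tmp:
    run_dir = Path(tmp)
    worker_input = minimal_state(full_state, {"question", "constraints"})
    checkpoint = save_json(run_dir, "task-1.checkpoint", worker_input)
    # The returned artifact is logged separately from the input checkpoint.
    save_json(run_dir, "task-1.artifact", {"summary": "stub worker output"})
    # Replay: reload exactly what the worker saw, without the transcript.
    replayed = json.loads(checkpoint.read_text())

print(replayed)
```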

Trace everything

If you cannot answer "which agent decided this, with what input, using which tool, at what cost?" then your system is not ready for serious production use. Observability is not an enterprise add-on for multi-agent systems. It is the operating system.
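A trace record that answers those four questions can be very small. The sketch below is an in-memory stand-in under stated assumptions: `TraceEvent` and `Tracer` are hypothetical names, the cost figure is passed in by the caller, and a production system would ship events to a real tracing backend.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class TraceEvent:
    agent: str           # which agent decided this
    tool: str            # using which tool
    input_summary: str   # with what input
    output_summary: str
    cost_usd: float      # at what cost (caller-supplied estimate)
    duration_s: float


@dataclass
class Tracer:
    events: list[TraceEvent] = field(default_factory=list)

    def record(
        self,
        agent: str,
        tool: str,
        input_summary: str,
        fn: Callable[[], str],
        cost_usd: float = 0.0,
    ) -> str:
        start = time.perf_counter()
        output = fn()
        elapsed = time.perf_counter() - start
        # Truncate the output so traces stay small and readable.
        self.events.append(
            TraceEvent(agent, tool, input_summary, str(output)[:200], cost_usd, elapsed)
        )
        return output

    def total_cost(self) -> float:
        return sum(event.cost_usd for event in self.events)


tracer = Tracer()
answer = tracer.record(
    "retrieval-worker", "search", "query=vendor pricing",
    lambda: "3 documents", cost_usd=0.002,
)
print(tracer.events[0])
```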

Set budgets and stop conditions

You need limits on tool calls, recursion depth, retries, and wall-clock time. Otherwise a multi-agent architecture turns into an expensive argument between plausible components.
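Those four limits fit in one small object that every agent call has to pass through. This is a sketch with hypothetical names (`Budget`, `BudgetExceeded`) and arbitrary example limits, not a recommendation for specific values.

```python
import time
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when any limit is hit, so the run stops instead of arguing on."""


@dataclass
class Budget:
    max_tool_calls: int
    max_depth: int
    max_retries: int
    max_seconds: float
    tool_calls: int = 0
    retries: int = 0
    started: float = field(default_factory=time.monotonic)

    def charge_tool_call(self, depth: int) -> None:
        """Call before every tool use; depth is the current recursion depth."""
        self.tool_calls += 1
        if self.tool_calls > self.max_tool_calls:
            raise BudgetExceeded("tool-call limit reached")
        if depth > self.max_depth:
            raise BudgetExceeded("recursion depth limit reached")
        if time.monotonic() - self.started > self.max_seconds:
            raise BudgetExceeded("wall-clock limit reached")

    def charge_retry(self) -> None:
        """Call before every retry."""
        self.retries += 1
        if self.retries > self.max_retries:
            raise BudgetExceeded("retry limit reached")


budget = Budget(max_tool_calls=2, max_depth=3, max_retries=1, max_seconds=30.0)
budget.charge_tool_call(depth=1)
budget.charge_tool_call(depth=1)
try:
    budget.charge_tool_call(depth=1)
    stopped = None
except BudgetExceeded as exc:
    stopped = str(exc)
print(stopped)
```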

Add human gates for high-impact actions

Microsoft's guidance is especially strong here: use approval steps when the action can change data, trigger external systems, or create compliance risk. Multi-agent systems multiply autonomy surfaces. That means they also multiply the need for policy boundaries.
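In code, a human gate can be as simple as a wrapper that refuses to run high-impact actions without an explicit yes. The action labels and the `approve` callback below are assumptions made for this sketch. They are not taken from Microsoft's guidance or any specific framework.

```python
from typing import Callable


class ApprovalDenied(Exception):
    """Raised when a human reviewer rejects a high-impact action."""


# Hypothetical labels for the three risk categories named above.
HIGH_IMPACT = frozenset({"change_data", "external_call", "compliance_risk"})


def gated_execute(
    action_kind: str,
    action: Callable[[], str],
    approve: Callable[[str], bool],
) -> str:
    """Run low-impact actions directly; ask a human before high-impact ones."""
    if action_kind in HIGH_IMPACT and not approve(action_kind):
        raise ApprovalDenied(action_kind)
    return action()


# A read-only action runs without consulting the approver.
print(gated_execute("read_data", lambda: "rows fetched", approve=lambda kind: False))
```

In practice `approve` would block on a ticket, a chat prompt, or a review queue. The point of the sketch is only that the gate sits in front of the action, not after it.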

If you remember only one line from this section, make it this: a multi-agent system is less like "one smart assistant with friends" and more like "a small distributed system with language interfaces." That framing gives you a cleaner reference architecture to build from.

A Reference Architecture for 2026 That Scales Without Theater

The most defensible multi-agent AI systems architecture in 2026 is surprisingly conservative:

Entry agent: receives the task, classifies intent, and decides whether this is a single-agent or delegated job.

Planner or router: only if the job branches; otherwise the entry agent continues alone.

Worker pool: each worker gets a narrow brief, scoped tools, and a strict output schema.

Verifier: optional and only attached to high-risk or high-value tasks.

Trace layer: captures prompts, tool calls, outputs, timings, and failure reasons.

Memory layer: stores durable artifacts, not giant unstructured transcripts.

Human approval gate: sits in front of destructive or externally visible actions.

That architecture is boring in the best possible way. It avoids the fantasy that every task needs a committee of agents negotiating in real time. Instead, it assumes that delegation is expensive and should be earned.

It also gives you a clean implementation path:

| Stage | What to build | What to avoid |
|---|---|---|
| Stage 1 | One strong agent with tools and schemas | Premature planner/reviewer stacks |
| Stage 2 | Orchestrator-worker for parallel subproblems | Shared free-form memory across all workers |
| Stage 3 | Verification for risky outputs | Infinite critique loops |
| Stage 4 | Policy gates, tracing, and replay | "Autonomous" actions without audit trails |

If your team is still proving product value, this staged approach is almost always better than starting with a full agent mesh.

Start Smaller Than the Hype

The practical lesson from 2026 is not that multi-agent systems are overhyped. It is that they are often misapplied. The best multi-agent AI systems architecture is not the one with the most roles on the diagram. It is the one that introduces coordination only where coordination pays for itself.

So start with a single agent. Add workers only when the task is truly decomposable. Add verification only when the downside of being wrong is meaningful. And treat observability, contracts, and approvals as first-class design elements, not cleanup work for later.

If you do that, you will build something much more valuable than an impressive demo. You will build a system your team can actually run, debug, and trust. If you want a useful next step, pair this with "15 Agentic AI Chrome Extensions That Actually Work in 2026" and review your current workflow against the smallest architecture that can still do the job.