How to Persist AI Agent Memory Across Sessions

Your agent looked solid in the demo. It remembered the user's preferences, carried context across tool calls, and even recovered from a follow-up question without breaking stride. Then the process restarted, a new worker picked up the request, and the agent forgot everything that mattered.

That failure usually is not a model problem. It is a memory architecture problem. Most teams treat chat history, session replay, and durable memory as the same thing, so they end up storing too much of the wrong data and too little of the right data.

If you want to persist AI agent memory across sessions in 2026, the simplest reliable approach is to split the system into three layers: session state for the current run, durable memory for facts worth keeping, and memory hygiene for correction, expiration, and scope control.

TL;DR: Start with a session backend, a small durable memory table, and explicit rules for what gets written or discarded. Add vector or graph retrieval later only when your recall problem actually becomes semantic or relational.

Once you separate those layers, the restart bug stops looking mysterious and starts looking like a normal engineering problem.

Why agents lose memory after restarts even when "memory" is turned on

The first thing to clarify is that context is not durable memory. As Redis points out in its guide to AI agent memory architecture, context windows reset on every API request, so they cannot preserve identity, preferences, or learned behavior across time on their own. A bigger context window makes forgetting slower, not impossible.

Framework features help, but they solve different parts of the problem. The OpenAI Agents SDK session docs describe sessions as a way to maintain conversation history across multiple agent runs, and the same docs show backends like file-based SQLite, Redis, and SQLAlchemy-backed storage. That is useful state management, but it is still session-centric: it keeps the conversation available, not a clean long-lived memory model for facts that should survive beyond one chat thread.

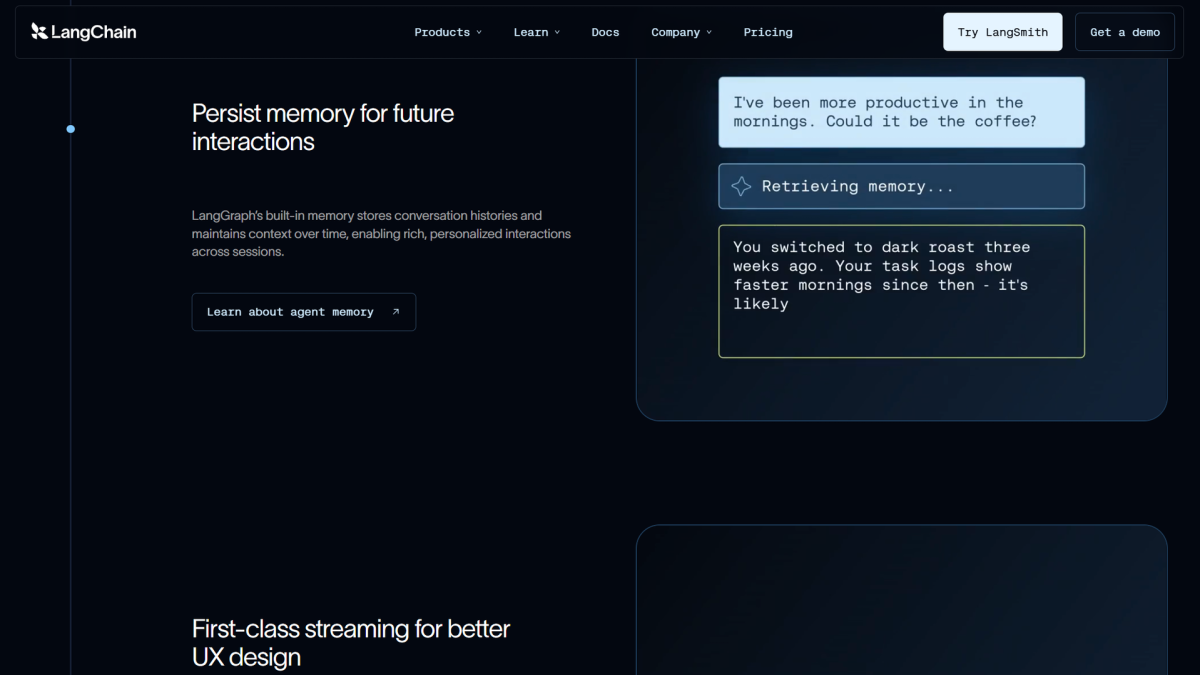

The same distinction shows up in LangGraph persistence. Checkpointers persist graph state into threads, which is great for fault tolerance, debugging, and conversational continuity. But LangGraph's own docs are clear that checkpoints alone do not let you share information across threads; for that, you need a store layered beside the checkpointer.

That is why agents often seem smart inside one run and unreliable across sessions: the app persisted thread state, but it never designed a durable memory layer with its own rules and boundaries. The fix starts by treating memory as separate layers, not one giant transcript.

The three layers you actually need: session state, durable memory, and memory hygiene

Think of AI agent memory architecture the same way you would think about application state in a mature backend.

Session state is RAM. It holds the active conversation, tool outputs, intermediate reasoning products, and short-lived task state for the current run or thread.

Durable memory is your database. It keeps the small set of user facts, project facts, summaries, and standing rules that should survive restarts and matter again later.

Memory hygiene is your governance layer. It decides what gets promoted into durable memory, what expires, what gets corrected, and what should never be stored in the first place.

This is also the cleanest way to reason about session memory vs long-term memory for AI agents. Session state helps the agent continue the current conversation. Long-term memory helps it resume the right relationship or workflow later. Hygiene is what keeps those two from collapsing into a junk drawer.

Here is the smallest useful shape:

```text
# Minimal layered memory flow for a production agent
User input
  -> session state for the active run
  -> extraction step for candidate memories
  -> durable memory store for facts worth keeping
  -> retrieval step that pulls back only scoped, relevant memories
  -> prompt assembly for the next run
```

Without that third layer, teams usually over-store raw history, reload stale facts, and pay for it with higher token cost and worse answers. Once the layers are explicit, the implementation choices become much easier.
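To make the flow concrete, here is a minimal Python sketch of one turn moving through the layers. Everything in it is an assumption made for illustration: the `SessionState` shape, the `remember:` prefix used as a stand-in for a real extraction step, and the plain dict used as the durable store. It shows the shape of the pipeline, not a production design.

```python
from dataclasses import dataclass, field


@dataclass
class SessionState:
    """Short-lived state for one run or thread (hypothetical shape)."""
    thread_id: str
    turns: list = field(default_factory=list)


def extract_candidates(turns):
    """Toy extraction step: keep only turns explicitly marked as durable facts.

    A real system might use rules or a model call here; the "remember:"
    prefix is an assumption made for this sketch.
    """
    prefix = "remember:"
    return [t[len(prefix):].strip() for t in turns if t.lower().startswith(prefix)]


def run_turn(session, durable_store, owner_id, user_input):
    """Walk one input through session state, extraction, storage, and retrieval."""
    session.turns.append(user_input)                  # session state
    for fact in extract_candidates([user_input]):     # extraction step
        facts = durable_store.setdefault(owner_id, [])
        if fact not in facts:
            facts.append(fact)                        # durable memory
    scoped = durable_store.get(owner_id, [])[-5:]     # scoped retrieval, capped at five
    # Prompt assembly: durable facts first, then the active conversation.
    return "\n".join(["Known facts:"] + scoped + ["Conversation:"] + session.turns)


store = {}  # stands in for a database that outlives the process
run_turn(SessionState("thread-1"), store, "user_42", "remember: prefers metric units")
prompt = run_turn(SessionState("thread-2"), store, "user_42", "How far is the office?")
```

The second call uses a brand-new `SessionState`, yet the stored preference still reaches the assembled prompt, because it lives in the durable store rather than in the thread.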

Choosing a persistence path: OpenAI Sessions, LangGraph checkpoints plus stores, or your own DB-backed layer

You do not need a universal answer. You need the smallest persistence path that matches your stack and failure mode.

| Option | Good fit | What it gives you | What it does not solve by itself |
|---|---|---|---|
| OpenAI Agents SDK sessions | You are already using the Agents SDK and want stateful multi-turn execution | Built-in session memory plus SQLite, Redis, SQLAlchemy, MongoDB, and other session backends | Durable fact modeling, invalidation rules, and cross-scope recall strategy |
| LangGraph persistence + store | You are building in LangGraph and need checkpointing plus shared memory | Thread checkpoints for run continuity and a store for information shared across threads | A framework-agnostic memory contract and disciplined write rules |
| Your own DB-backed layer | You want portability or your memory needs are still simple | Full control over schemas, scoping, retention, and query cost | Out-of-the-box extraction, embedding, or semantic search helpers |

If you are already inside OpenAI Sessions, use them for session continuity and keep durable memory separate. If you are in LangGraph, keep the same split: checkpoints for thread state, store for cross-thread memory. If you are framework-agnostic, a Postgres or SQLite table with clear write and retrieval rules is often the fastest way to stop losing memory across sessions.

This is also where the LangChain long-term memory docs are useful conceptually: they frame long-term memory as storing and recalling data across conversations and sessions, which is exactly the boundary many tutorials skip. Once you choose the right persistence layer, the next question is what deserves to be written there at all.

How to persist AI agent memory across sessions without bloating context

The trick is not to store more. The trick is to store less, but store it intentionally.

Start with three write buckets after each run:

Discard: transient chatter, repeated tool logs, one-off reasoning crumbs.

Keep in session only: short task context that matters for the current thread but not next week.

Promote to durable memory: stable preferences, standing constraints, canonical task state, and compact summaries of important outcomes.
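The three buckets above can be sketched as a small routing function. The item kinds and the `stable` flag are hypothetical names chosen for this example; your extractor would define its own taxonomy. The one deliberate design choice is the default: anything unrecognized is discarded, so the system errs toward storing less.

```python
from enum import Enum


class Bucket(Enum):
    DISCARD = "discard"
    SESSION_ONLY = "session_only"
    DURABLE = "durable"


# Hypothetical item kinds; a real extractor would define its own taxonomy.
DURABLE_KINDS = {"preference", "constraint", "task_state", "outcome_summary"}
SESSION_KINDS = {"task_context"}


def route(item):
    """Assign one candidate item to a write bucket.

    `item` is a dict with a "kind" key and an optional "stable" flag.
    Unrecognized kinds are discarded, so the default is to store less.
    """
    kind = item.get("kind")
    if kind in DURABLE_KINDS and item.get("stable", True):
        return Bucket.DURABLE
    # Durable-looking items that are not yet stable stay in the session.
    if kind in SESSION_KINDS or kind in DURABLE_KINDS:
        return Bucket.SESSION_ONLY
    return Bucket.DISCARD
```

For example, `route({"kind": "tool_log"})` falls through to `Bucket.DISCARD`, while a preference the user has only floated tentatively (`"stable": False`) stays in the session until it is confirmed.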

For most teams, a structured store beats a transcript store. A durable record should answer a question like "what changed, for whom, in what scope, and until when?" better than raw chat history can.

```sql
-- Durable memory records keyed by owner, scope, and freshness
CREATE TABLE agent_memory (
    id TEXT PRIMARY KEY,
    owner_id TEXT NOT NULL,
    scope TEXT NOT NULL,
    memory_type TEXT NOT NULL,
    content_json TEXT NOT NULL,
    source TEXT,
    confidence REAL DEFAULT 1.0,
    last_verified_at TIMESTAMP,
    expires_at TIMESTAMP,
    superseded_by TEXT
);
```
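As a rough illustration of how little code this takes, the sketch below creates the table with Python's standard `sqlite3` module and writes one record. The `remember` helper, its `ttl_days` parameter, and the choice to store timestamps as ISO 8601 UTC strings are assumptions of this example, not part of any framework.

```python
import json
import sqlite3
import uuid
from datetime import datetime, timedelta, timezone

SCHEMA = """
CREATE TABLE IF NOT EXISTS agent_memory (
    id TEXT PRIMARY KEY,
    owner_id TEXT NOT NULL,
    scope TEXT NOT NULL,
    memory_type TEXT NOT NULL,
    content_json TEXT NOT NULL,
    source TEXT,
    confidence REAL DEFAULT 1.0,
    last_verified_at TIMESTAMP,
    expires_at TIMESTAMP,
    superseded_by TEXT
)
"""


def remember(conn, owner_id, scope, memory_type, content, ttl_days=None, source=None):
    """Insert one durable record and return its id (hypothetical helper)."""
    now = datetime.now(timezone.utc)
    # Timestamps are stored as ISO 8601 UTC strings; that is a choice of this sketch.
    expires = None
    if ttl_days is not None:
        expires = (now + timedelta(days=ttl_days)).isoformat(timespec="seconds")
    memory_id = str(uuid.uuid4())
    conn.execute(
        "INSERT INTO agent_memory (id, owner_id, scope, memory_type, content_json,"
        " source, last_verified_at, expires_at) VALUES (?, ?, ?, ?, ?, ?, ?, ?)",
        (memory_id, owner_id, scope, memory_type, json.dumps(content),
         source, now.isoformat(timespec="seconds"), expires),
    )
    conn.commit()
    return memory_id


conn = sqlite3.connect(":memory:")  # use a file path for real persistence
conn.execute(SCHEMA)
mem_id = remember(conn, "user_42", "global", "preference", {"units": "metric"}, ttl_days=90)
```

Swapping `":memory:"` for a file path is what makes the record survive a restart; the rest of the code stays the same.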

That schema is deliberately boring, and that is the point. Before you reach for vectors, prove that you can reliably store a user preference, an active project summary, or a tool-derived fact with clear ownership and expiration.

Your retrieval path should be just as selective. Query by owner, scope, freshness, and type first. Only then decide whether you need semantic ranking.

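```python
# Build prompt memory from the smallest relevant durable set
from datetime import datetime, timezone


def select_memories(rows, owner_id, scope):
    # Assumes expires_at values are timezone-aware datetimes (or None).
    now = datetime.now(timezone.utc)
    chosen = []
    for row in rows:
        # Never cross owner boundaries.
        if row["owner_id"] != owner_id:
            continue
        # Keep only the requested scope plus global facts.
        if row["scope"] not in {scope, "global"}:
            continue
        # Skip expired facts.
        if row.get("expires_at") and row["expires_at"] <= now:
            continue
        # Skip facts that a newer record has replaced.
        if row.get("superseded_by"):
            continue
        chosen.append(row)
    # Cap the injected set; this keeps the first five matches in input order.
    return chosen[:5]
```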
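If the records already live in SQLite or Postgres, the same filter can be pushed into the query so that only the scoped, live rows ever leave the database. The sketch below uses Python's standard `sqlite3` module; the `load_memories` name and the ordering by `last_verified_at` are assumptions of this example, and the string comparison on `expires_at` only works if timestamps are stored as ISO 8601 UTC strings in one consistent format.

```python
import sqlite3
from datetime import datetime, timezone


def load_memories(conn, owner_id, scope, limit=5):
    """Return up to `limit` live memories for one owner and scope (plus global)."""
    # Assumes timestamps are ISO 8601 UTC strings in one consistent format,
    # so lexicographic comparison matches chronological order.
    now = datetime.now(timezone.utc).isoformat(timespec="seconds")
    cursor = conn.execute(
        """
        SELECT id, memory_type, content_json
        FROM agent_memory
        WHERE owner_id = ?
          AND scope IN (?, 'global')
          AND superseded_by IS NULL
          AND (expires_at IS NULL OR expires_at > ?)
        ORDER BY last_verified_at DESC
        LIMIT ?
        """,
        (owner_id, scope, now, limit),
    )
    return cursor.fetchall()
```

Ordering by the most recently verified record means the cap drops the oldest facts first, which is usually the safer default when more than five rows match.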

The operational rule is simple: inject the smallest memory set that changes the next decision. If five facts do the job, do not pass fifty. If a memory can be derived again from the source of truth, store a summary or key instead of the whole artifact. That one rule does more for cost and quality than most memory libraries.

When facts change, overwrite or supersede them. If a user updates a preference or a task state flips from open to done, the old memory should stop competing for retrieval. That is the difference between memory that helps and retrieval that just looks busy. If you are also deciding between memory and retrieval-heavy patterns, the next comparison worth making is when to use RAG versus memory for AI agents.
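One way to make superseding part of the write path is to retire the old record and insert the new one in a single transaction. This is a sketch under a strong assumption: that there is at most one live record per owner, scope, and memory type. The `supersede` helper is hypothetical, and it targets the `agent_memory` table shown earlier.

```python
import sqlite3
import uuid
from datetime import datetime, timezone


def supersede(conn, owner_id, scope, memory_type, new_content_json):
    """Write a new fact and retire the live fact it replaces, atomically.

    Assumes one live record per (owner_id, scope, memory_type); adjust the
    matching rule if a type can hold several facts at once.
    """
    new_id = str(uuid.uuid4())
    now = datetime.now(timezone.utc).isoformat(timespec="seconds")
    with conn:  # commits on success, rolls back if either statement fails
        # Retire the old record first so the new row is not marked by its own id.
        conn.execute(
            "UPDATE agent_memory SET superseded_by = ?"
            " WHERE owner_id = ? AND scope = ? AND memory_type = ?"
            " AND superseded_by IS NULL",
            (new_id, owner_id, scope, memory_type),
        )
        conn.execute(
            "INSERT INTO agent_memory (id, owner_id, scope, memory_type,"
            " content_json, last_verified_at) VALUES (?, ?, ?, ?, ?, ?)",
            (new_id, owner_id, scope, memory_type, new_content_json, now),
        )
    return new_id
```

Keeping the old row, rather than deleting it, preserves an audit trail: `superseded_by` points at the record that replaced it, and retrieval simply filters on `superseded_by IS NULL`.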

Once the write and retrieval contract is small and explicit, you can make sane decisions about cost and scale.

Cost, latency, and scope: when SQLite is enough and when you need vector or graph retrieval

Many teams overengineer long-term memory for AI agents before they have a real retrieval problem. Community discussions around LangGraph persistence keep landing on the same practical point: a local database is often enough until you genuinely need fuzzy recall.

SQLite or Postgres is usually enough when:

You mostly retrieve by user, workspace, ticket, or task scope.

The memory count per scope is still modest.

You care more about correctness and auditability than semantic recall.

Most durable items are structured facts, summaries, or explicit state transitions.

Move to vector retrieval when:

Users refer to older information in many different phrasings.

Memory volume is large enough that keyword or scoped lookup is no longer sufficient.

You need "find similar prior situations" rather than "load the current known facts."

Move to graph-style retrieval when:

Relationships matter more than plain similarity.

The agent must reason over entities, dependencies, ownership, or multi-hop links.

You need to answer questions like "which customer, project, and blocker are connected to this incident?"
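To show what the vector step adds on top of scoped lookup, here is a dependency-free sketch that ranks rows by cosine similarity after they have already passed the owner, scope, and freshness filters. The `embedding` field and the toy two-dimensional vectors are assumptions; in practice the vectors would come from an embedding model and the ranking would usually happen in a vector index.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_scoped(rows, query_vec, k=3):
    """Rank rows that already passed deterministic filters by similarity to the query."""
    ranked = sorted(rows, key=lambda r: cosine(r["embedding"], query_vec), reverse=True)
    return ranked[:k]


# Toy data: "embedding" is a hypothetical field holding precomputed vectors.
rows = [
    {"id": "a", "embedding": [1.0, 0.0]},
    {"id": "b", "embedding": [0.0, 1.0]},
    {"id": "c", "embedding": [1.0, 1.0]},
]
top = rank_scoped(rows, [1.0, 0.1], k=2)
```

The point of the ordering is that similarity only reorders what deterministic filters have already allowed, so a semantically close memory from the wrong owner or scope can never surface.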

The Redis guide to AI agent memory is useful here because it frames the jump clearly: short-term memory and long-term memory solve different problems, and semantic retrieval becomes valuable only when the memory layer grows beyond deterministic lookup. Until then, the cheapest production system is often the best one because it is easier to debug.

That is also why "SQLite first, vectors later" is not a toy strategy. It is a way to force the memory contract to become clear before you add infrastructure that can hide weak assumptions.

Common failure modes: stale facts, wrong scopes, and retrieval that looks like memory but is not

The most common failure mode is stale memory. A user changes a preference, a workflow changes, or a project status moves on, but the old fact never gets retired. Then the agent retrieves both versions and answers with false confidence. Store last_verified_at, add expirations where appropriate, and make contradiction handling part of the write path instead of a future cleanup project.
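A small freshness check makes that rule enforceable at read time. The sketch below is one possible shape: the three labels and the 30-day review window are arbitrary choices for illustration, and it assumes `expires_at` and `last_verified_at` are timezone-aware datetimes.

```python
from datetime import datetime, timedelta, timezone


def freshness(row, now=None, max_age=timedelta(days=30)):
    """Classify a record as "expired", "needs_review", or "fresh".

    Assumes expires_at and last_verified_at are timezone-aware datetimes or None.
    The 30-day default is an arbitrary value chosen for this sketch.
    """
    now = now or datetime.now(timezone.utc)
    expires_at = row.get("expires_at")
    if expires_at is not None and expires_at <= now:
        return "expired"
    verified = row.get("last_verified_at")
    # A fact that was never verified is treated the same as one verified too long ago.
    if verified is None or now - verified > max_age:
        return "needs_review"
    return "fresh"
```

Records labeled `needs_review` can still be injected, but they are good candidates for a confirmation question or a lower confidence score before the agent relies on them.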

The second failure mode is wrong scope. Teams store memory under one flat namespace, then wonder why an agent leaks customer A's preferences into customer B's reply. Scope every memory by owner and context at minimum. In practice, that usually means user, organization, project, and task boundaries, not one giant "memories" bucket.
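One way to express those boundaries is a hierarchical scope path, where a memory is visible only to requests at or below its own level. This is a hypothetical alternative to a flat scope string; the tuple format and the `org:` and `project:` prefixes are assumptions of this sketch.

```python
def visible(memory_scope, request_scope):
    """A memory is visible when its scope path is a prefix of the request's path.

    Scopes are tuples such as ("org:acme", "project:alpha"); the empty tuple
    acts as a global scope within one owner.
    """
    return request_scope[:len(memory_scope)] == memory_scope


def scoped(rows, owner_id, request_scope):
    """Filter by owner first, then by scope visibility."""
    return [
        r for r in rows
        if r["owner_id"] == owner_id and visible(r["scope"], request_scope)
    ]


rows = [
    {"id": "org-rule", "owner_id": "u1", "scope": ("org:acme",)},
    {"id": "alpha-note", "owner_id": "u1", "scope": ("org:acme", "project:alpha")},
    {"id": "beta-note", "owner_id": "u1", "scope": ("org:acme", "project:beta")},
    {"id": "other-user", "owner_id": "u2", "scope": ("org:acme",)},
]
hits = scoped(rows, "u1", ("org:acme", "project:alpha"))
```

A request inside project alpha sees the organization-wide rule and the alpha note, but never the beta note or another owner's records.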

The third failure mode is confusing retrieval with memory. Dumping transcripts into a vector index is not the same as building memory. Real memory needs promotion rules, correction paths, and confidence about what is still true. LangGraph's split between checkpoints and stores is useful precisely because it forces you to ask what should remain thread-scoped and what should outlive the thread.

The fourth failure mode is storing every tool log forever. That makes prompts expensive and retrieval noisy. Keep raw logs in your operational system of record if you need them, but promote only summaries or stable facts into the agent-facing memory layer. Memory pruning strategies for AI agents are where that discipline usually pays for itself fastest.

If you avoid those four traps, the memory system becomes much more boring, and boring is exactly what you want in production.

Start with one memory contract, not one more database

When teams ask how to persist AI agent memory across sessions, the right answer is to start with one contract:

What belongs in session state only?

What facts deserve durable storage?

How does a fact expire, change, or get corrected?

What is the smallest memory set the agent actually needs on the next run?

Everything else is an implementation detail.

The best AI agent memory architecture is usually not the one with the most components. It is the one that survives a restart, keeps scopes clean, and recalls the right five things instead of the last five hundred. Build that first, and your agent will feel more consistent immediately without turning memory into an infrastructure hobby.